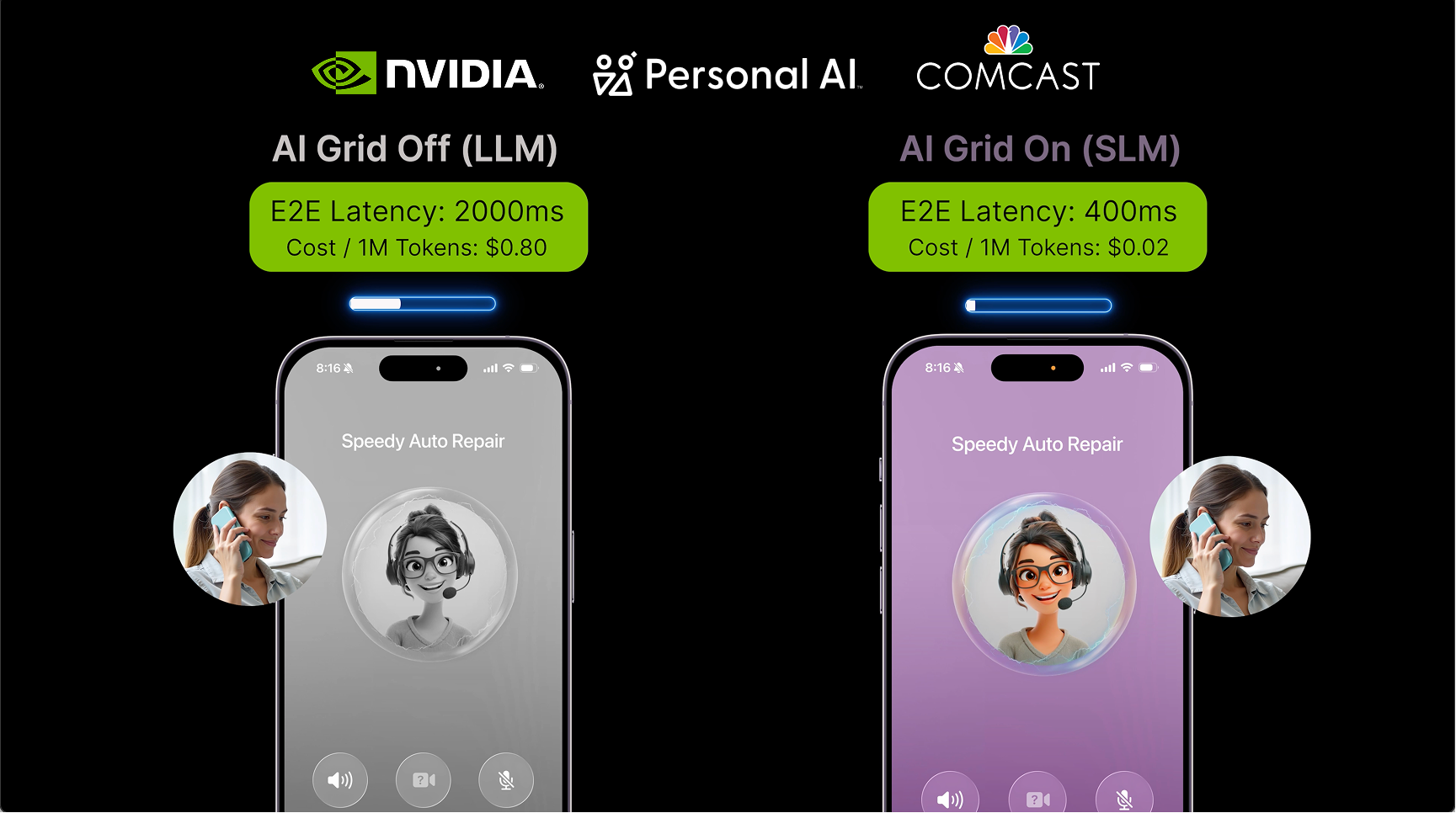

SAN JOSE, Calif., March 17, 2026 (GLOBE NEWSWIRE) -- Personal AI today announced a strategic collaboration with Comcast at NVIDIA GTC to advance the AI Grid, a new framework that leverages the network edge to distribute AI workloads and accelerate next-generation application delivery. Personal AI’s memory-based Small Language Models, deployed in Comcast’s AI Grid trials, deliver faster response times, higher concurrency, user-specific memory, and lower operating costs for real-time, hyper-personalized voice applications.

Source: https://youtu.be/GI9ui916O7w

Comcast’s hyper-local network infrastructure, which reaches more than 65 million homes and businesses across the U.S., is well positioned to put computing power closer to customers, creating one of the largest platforms in the U.S. for delivering real-time AI inference. Trials conducted at Comcast’s headquarters in Philadelphia validated Personal AI’s Grid-ready architecture, with initial results establishing the technical and economic viability of distributed inferencing for AI-native services.

"With the AI ecosystem rapidly evolving, there is a growing role for distributed compute to play in running real-time Edge AI applications that unlock faster, smarter and more responsive experiences for consumers and businesses,” said Elad Nafshi, Chief Network Officer at Comcast. “Smaller, more personalized models that are hyper-tuned for specific tasks will enable the next wave of AI and our trials with Personal AI demonstrate that memory-driven SLMs on our distributed network can deliver performance benchmarks that generic models cannot match."

Bringing Intelligence Beyond the Cloud

Traditional cloud-based LLM architecture faces structural limitations in latency, cost, and privacy when applied to real-time, user-specific applications at scale. The AI Grid approach addresses these challenges by distributing inference workloads across network infrastructure, including centralized data centers, regional hubs, and edge sites.

With memory embedded in the models themselves, Personal AI’s SLM technology reduces the need for context-heavy LLMs. It uses specialist models and a Memory Core to serve diverse cohorts of customers with models that are small enough to run on the edge and dynamic enough to maintain long-term and conversational memory over time. The combination of Personal AI’s SLMs and memory architecture allows operators to serve end-users with real-time, multi-modal, and hyper-personalized AI experiences at scale.

"AI without memory will not win in this market," said Suman Kanuganti, CEO and Co-Founder of Personal AI. "Memory is what turns AI into personal intelligence. By running memory-based Small Language Models at the edge of the network, we enable ‘AI of Things’ that remembers context and improves over time with interactions from the real world, all within latency and regulatory requirements.”

Accelerated by NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs, Personal AI’s Small Language Models allow operators to achieve latency, concurrency, and throughput performance that was once available only in cloud environments and can now be delivered closer to end users using network infrastructure.

“We’re at a generational inflection point where AI and telecommunications are converging on the same infrastructure, creating a distributed edge AI network perfectly suited for inference,” said Chris Penrose, Global VP - Business Development - Telco at NVIDIA. “What’s important is computing every workload where it makes most sense in terms of cost and performance. The collaboration between Comcast and Personal AI demonstrates that SLMs running on an NVIDIA-powered AI grid can meet the latency, accuracy, and cost requirements for real-time AI services at telecom scale.”

About Personal AI

Personal AI builds memory infrastructure for AI systems. The company’s identity-based memory architecture powers specialized Small Language Models (SLMs) that deliver superior precision, latency, and cost efficiency compared to centralized cloud LLMs. By replacing context-window-based personalization with persistent, multi-layered memory, Personal AI enables real-time, personalized AI experiences at scale. The platform is purpose-built for distributed deployment on telecommunications infrastructure, supporting privacy-first, on-network AI integrated with voice, text, and data services. For more information, visit https://personal.ai or email press@personal.ai.

A photo accompanying this announcement is available at https://www.globenewswire.com/NewsRoom/AttachmentNg/32647521-4a62-4ab5-9557-47c050d5c8b6